Age Verification Is Everywhere Now and People Are Starting to Push Back

Why governments are demanding it, how AI made everything worse, and why companies should think twice

If you’ve been online lately, you’ve probably seen it:

“Upload your ID.”

“Scan your face.”

“Confirm your age to continue.”

Age verification is no longer just a checkbox asking if you are over 18. Governments are pushing for stronger systems. Platforms are rushing to comply. AI is making the stakes feel higher. Brands are quietly supporting it in the background.

But while the goal sounds simple, "protect the kids," the reality is messier.

Why Governments Are Pushing Age Verification So Hard

Politically, protecting children online is one of the easiest arguments to win. No one wants to appear soft on child safety.

In the UK, the Online Safety Act requires platforms hosting adult content to use “robust” age checks. Across the EU, the Digital Services Act pushes companies to take stronger responsibility for protecting minors online.

Lawmakers argue that simple “Yes, I’m 18” buttons do not work, and they are not wrong. Kids can lie. They always have. Regulators now want platforms to prove they are doing more than trusting users.

There is also public pressure. News headlines about online grooming, explicit content, or AI-generated abuse push governments to act quickly. Whether the solutions are carefully designed or rushed into law is another story.

AI Made Everything More Complicated

Artificial intelligence has fueled this push.

AI tools can now generate realistic fake images, deepfakes, and explicit content at scale. This includes horrifying cases involving minors. UNICEF has warned that AI-generated sexual abuse material is becoming a serious global issue.

So governments react: “We need stricter controls.”

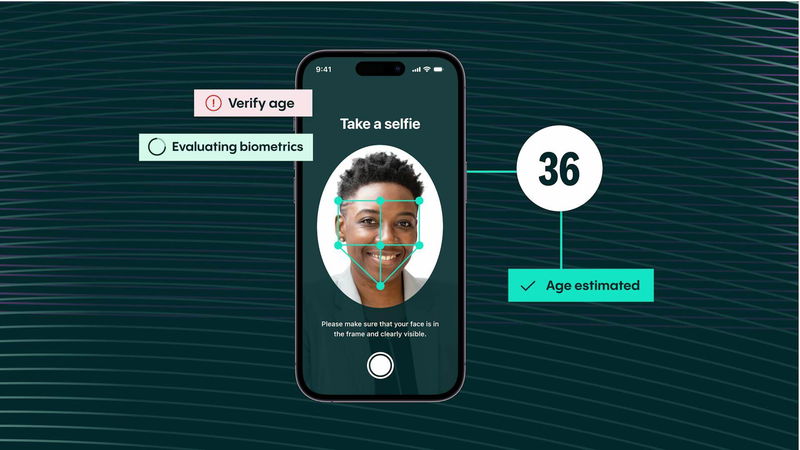

But here is the twist: AI is also being used to verify your age.

Face-scanning tools estimate how old you look. Algorithms analyze your features. Some systems require live selfies. Digital rights groups, like the Electronic Frontier Foundation, have raised serious concerns about privacy, bias, and long-term risks.

AI does not just create new harms; it also creates new surveillance tools.

And those tools do not always work well.

Age-estimation systems misclassify people, especially people of color, trans and non-binary individuals, or those who look younger or older than average. When that happens, real adults are locked out or forced to upload more personal data.

That is not protection; it is friction and risk.

Why Corporations and Brands Are Jumping On Board

Governments are not the only ones driving this.

Companies are adding age verification for three reasons:

1. Legal Fear

No platform wants fines or lawsuits. If laws mandate age checks, companies comply, sometimes in the fastest, most blunt way possible.

2. Advertiser Pressure

Brands do not want their ads appearing next to explicit content. Age gates make platforms look brand safe.

3. Public Image

It is easier to say, “We verify age to protect children,” than to explain the complexity of online safety.

From a PR perspective, age verification sounds responsible. From a technical and ethical perspective, it is not simple.

The Negative Side: Why Companies Should Slow Down

Here’s where the conversation becomes uncomfortable.

Privacy Risks Are Massive

Uploading government IDs or face scans creates a database of incredibly sensitive information. If that data is breached, users face identity theft, harassment, or worse.

And breaches occur constantly.

It Kills Anonymity

The internet has always allowed people to explore sensitive topics, such as sexual health, identity, and trauma, without revealing who they are.

Mandatory ID checks destroy that layer of privacy, which has a chilling effect, especially for marginalized communities.

Bias and Exclusion

AI age tools are not perfect, and users pay the price for the tools' mistakes.

Getting blocked from a site because an algorithm thinks you are 16 when you are 25 is frustrating. Being forced to upload your passport to prove yourself is invasive.

It Doesn’t Actually Stop Determined Users

Teens will still find ways around these systems: VPNs, fake IDs, or borrowed credentials. The internet adapts fast.

So who carries the burden?

Regular, honest users.

The Positive Side: Why Age Verification Isn’t Completely Evil

To be fair, there are advantages.

It can reduce minors' casual exposure to explicit commercial content.

It gives companies a compliance shield against regulators.

It signals that platforms take child safety seriously.

When done carefully, with minimal data collection and strong privacy protections, age assurance can be one layer of protection.

The problem is when it becomes the only layer or is overly invasive.

What Companies Should Do Instead

If companies must implement age checks, they should:

Avoid storing raw ID documents when possible.

Use privacy-preserving proofs, verifying “over 18” without storing identity.

Keep biometric processing on-device rather than in central databases.

Be transparent about the data collected and how long it is kept.

Combine age controls with better content moderation and reporting systems.

Age verification should be narrow, targeted, and privacy-first, not a mass identity sweep.
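To make the "verify over 18 without storing identity" idea concrete, here is a minimal sketch in Python. It assumes a hypothetical setup in which an age-check provider and a platform share a secret key: the provider checks the user's age however it likes, then hands back a signed token that carries only a yes/no claim and an expiry time. The names `SECRET_KEY`, `issue_age_token`, and `check_age_token` are made up for illustration. This is a simplified signed attestation, not a true zero-knowledge proof, and a real deployment would need proper key management and protections against token sharing.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical secret shared by the age-check provider and the platform.
SECRET_KEY = b"demo-key-change-me"


def issue_age_token(is_over_18: bool, ttl_seconds: int = 3600,
                    now: Optional[float] = None) -> str:
    """Provider side: sign a token holding only the age claim and an expiry.

    No name, birth date, ID number, or photo goes into the payload.
    """
    issued = time.time() if now is None else now
    payload = {"over_18": is_over_18, "exp": int(issued) + ttl_seconds}
    body = base64.urlsafe_b64encode(
        json.dumps(payload, sort_keys=True).encode()
    ).decode()
    sig = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{sig}"


def check_age_token(token: str, now: Optional[float] = None) -> bool:
    """Platform side: accept only a validly signed, unexpired over-18 claim."""
    current = time.time() if now is None else now
    parts = token.rsplit(".", 1)
    if len(parts) != 2:
        return False
    body, sig = parts
    expected = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison to avoid leaking signature bytes via timing.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    try:
        payload = json.loads(base64.urlsafe_b64decode(body.encode()))
    except ValueError:
        return False
    return payload.get("over_18") is True and payload.get("exp", 0) > current


if __name__ == "__main__":
    token = issue_age_token(True, ttl_seconds=600)
    print("accepted:", check_age_token(token))
```

The platform in this sketch never sees or stores an identity document. It keeps, at most, a short-lived token that says "over 18: yes" and when that statement expires.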

The Bigger Picture

Here is the uncomfortable truth:

Age verification is sold as a simple solution to a complex problem.

Governments want to protect children. That is valid.

AI has made harmful content easier to create. That is real.

Brands want safety and compliance. That makes business sense.

But turning the internet into an ID checkpoint carries long-term consequences.

Once face scans and ID uploads become normal, rolling them back becomes very hard.

Protection matters. Privacy does too.

If companies rush into intrusive verification systems without safeguards, they may solve one problem while quietly creating a bigger one.

And that is the part we need to discuss more.
